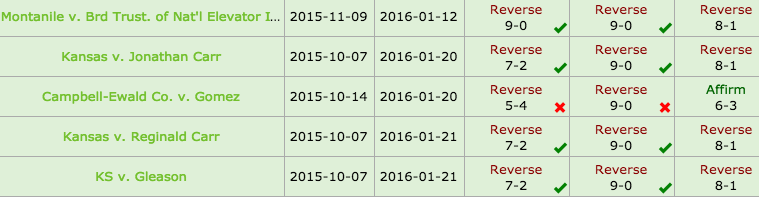

Today the Court decided five cases (although three of them were consolidated). Both the Crowd and the Algorithm accurately predicted the outcomes in 5 out of the 6 cases. However, neither the Crowd nor the Algorithm predicted the correct vote split in any of the cases.

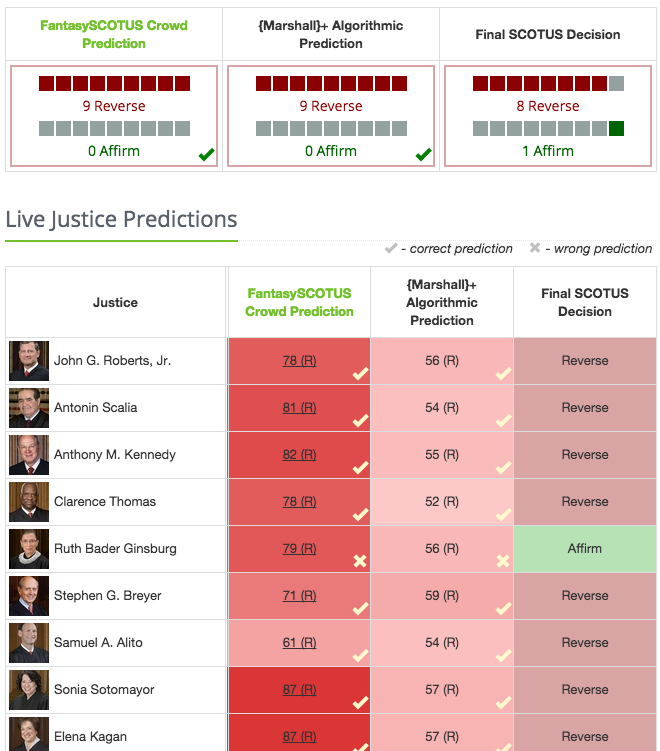

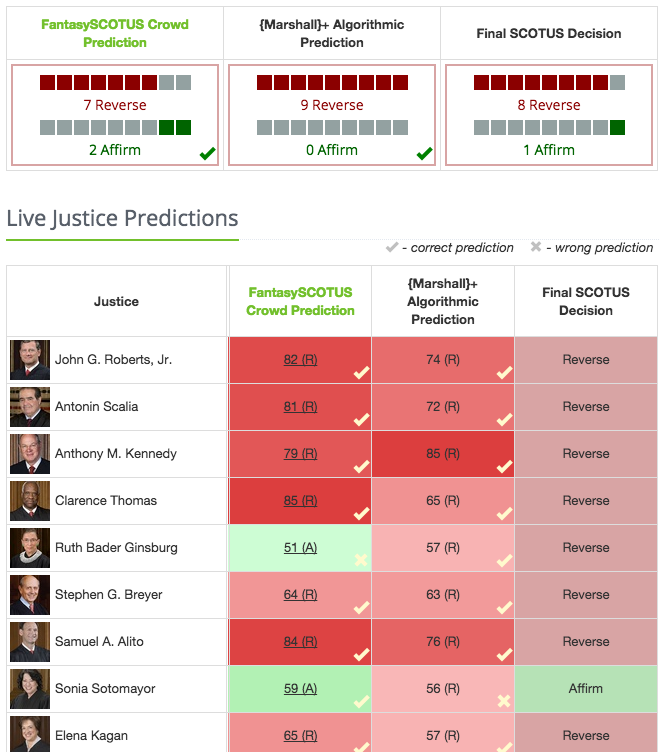

In Montanile v. Brd Trust. of Nat’l Elevator Ind. (ERISA), the Court reversed 8-1, with only Justice Ginsburg in dissent.

Not a single user predicted an 8-1 reversal or picked up on Justice Ginsburg's dissent, even though she dissented in a substantially similar case in 2002. As her dissent today noted:

> What brings the Court to that bizarre conclusion? As developed in my dissenting opinion in Great-West Life & Annuity Ins. Co. v. Knudson, 534 U. S. 204, 224–234 (2002), the Court erred profoundly in that case by reading the work product of a Congress sitting in 1974 as "unravel[ling] forty years of fusion of law and equity, solely by employing the benign sounding word 'equitable' when authorizing 'appropriate equitable relief.'" Langbein, What ERISA Means by "Equitable": The Supreme Court's Trail of Error in Russell, Mertens, and Great-West, 103 Colum. L. Rev. 1317, 1365 (2003).

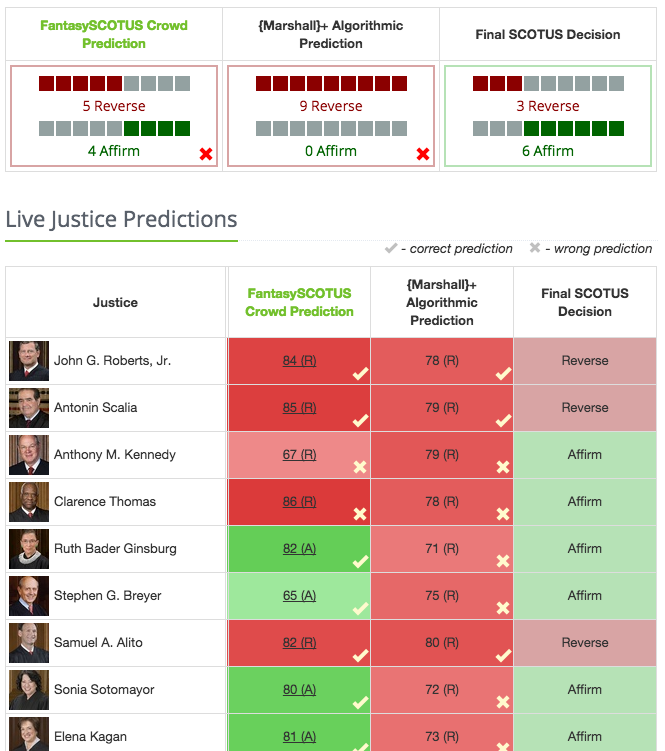

Second, in Campbell-Ewald Co. v. Gomez (class action), the Court affirmed 6-3. We totally missed this one: both the Crowd and the Algorithm predicted a reversal.

Again, no one accurately predicted this split.

The final three consolidated cases, Kansas v. Jonathan Carr, Kansas v. Reginald Carr, and Kansas v. Gleason, concerned jury instructions in death penalty sentencing. The outcome was 8-1 Reverse. Both the Crowd and the Algorithm predicted the outcome, but neither got the correct split. Individual users, however, correctly predicted that Justice Sotomayor would be alone in dissent.

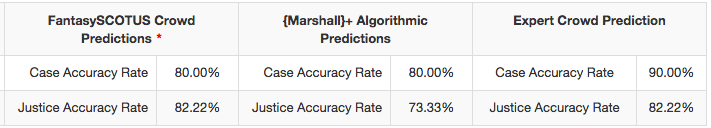

With 10 cases decided, the FantasySCOTUS Crowd and Algorithm have each correctly predicted 80% of the cases. However, the Crowd's accuracy in predicting individual Justices' votes is much higher. As for the Expert Crowd, they nailed 90% of the cases so far.

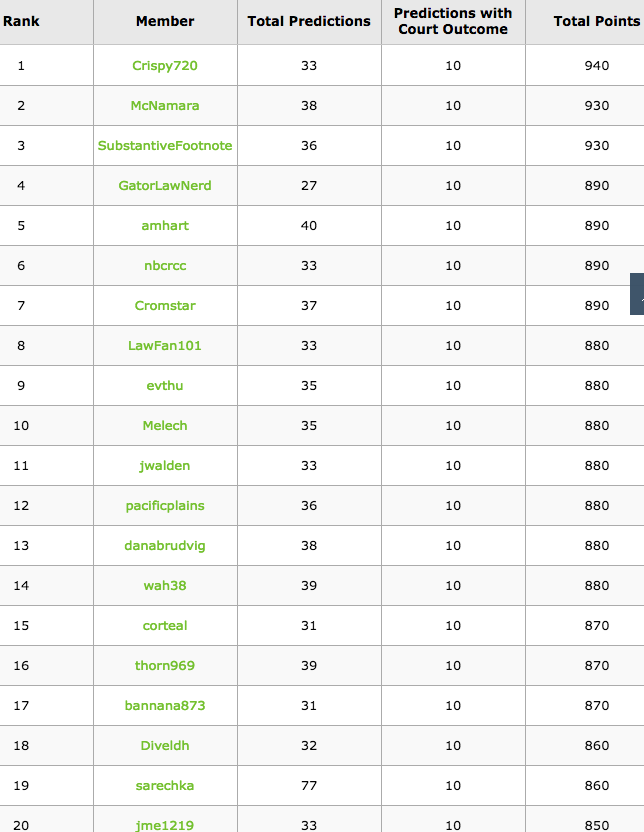

And here are the rankings for the top 20 users.