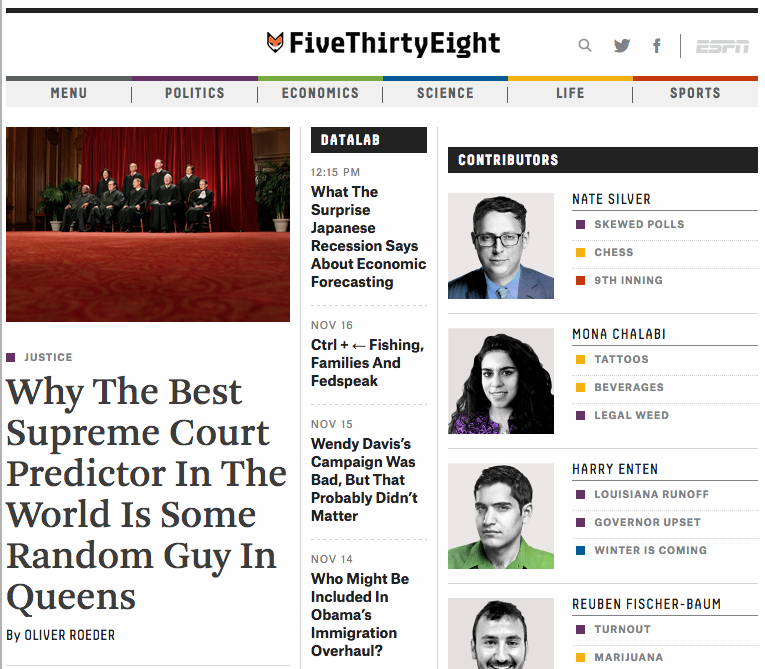

I am not athletic. I never fathomed that anything I would ever do could possibly be featured on ESPN. But it has happened. FiveThirtyEight has a detailed profile on Supreme Court prediction making, FantasySCOTUS, {Marshall}+, and our three-time reigning champion Jacob Berlove.

On FantasySCOTUS:

Blackman also launched the website FantasySCOTUS in 2009 — as a joke. Like fantasy sports, human players log on, pick justices to vote this way or that, and score points once the decisions come down. To Blackman’s surprise, it took off, and thousands of people now participate.

The real promise of FantasySCOTUS isn’t entertainment, but prediction. Like the Iowa Electronic Markets, or the sadly defunct Intrade, FantasySCOTUS can harness the wisdom of the crowd — incentivizing its participants to make accurate predictions, and then observing those decisions in the aggregate. This year there is a $10,000 first prize put up by the media firm Thomson Reuters.
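To make "observing those decisions in the aggregate" concrete, here is a minimal sketch of one simple way to aggregate crowd picks: a plain majority vote per case. This is an illustration only, not a description of how FantasySCOTUS actually scores or combines predictions. The case names, the picks, and the function names `crowd_prediction` and `accuracy` are all hypothetical.

```python
from collections import Counter


def crowd_prediction(picks):
    """Return the most common pick and the share of players who made it."""
    counts = Counter(picks)
    outcome, votes = counts.most_common(1)[0]
    return outcome, votes / len(picks)


def accuracy(predictions, actual):
    """Fraction of decided cases where the prediction matched the outcome."""
    hits = sum(predictions[case] == actual[case] for case in actual)
    return hits / len(actual)


# Hypothetical picks from individual players, keyed by a made-up case id.
picks_by_case = {
    "case_a": ["reverse", "reverse", "affirm", "reverse"],
    "case_b": ["affirm", "affirm", "reverse"],
    "case_c": ["reverse", "affirm", "affirm", "affirm", "reverse"],
}
# Hypothetical outcomes once the decisions come down.
actual = {"case_a": "reverse", "case_b": "affirm", "case_c": "reverse"}

crowd = {case: crowd_prediction(p)[0] for case, p in picks_by_case.items()}
crowd_accuracy = accuracy(crowd, actual)
```

In this toy data the crowd gets two of the three cases right. A real aggregate could just as well weight players by their track record, which a plain majority vote does not do.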

“I had no idea whether it’d be accurate or not — I did this entirely on a whim. And then by the end of the year I found out that this is actually pretty good,” Blackman said. The serious FantasySCOTUS players generate predictions cracking 70 percent accuracy.

On {Marshall}+:

Josh Blackman, a professor at South Texas College of Law, has recently taken up the predicting mantle. Blackman and his partners, Michael Bommarito and Daniel Martin Katz, have built a second-generation computer model, called {Marshall}+, after former Chief Justice John Marshall. While it may be slightly less accurate than the Martin-Quinn model, it’s far more robust. It’s built on data going back more than 60 years, and doesn’t rely on a given composition of the court, as the Martin-Quinn model did. The model goes beyond Martin’s and Quinn’s classification trees, employing what the authors call a “random forest approach.” They can even “time travel,” testing their model on historical cases, using only the information they would have had at the time. It correctly predicts 70 percent of cases since 1953. And it’s public — the source code for {Marshall}+ is available on GitHub.
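The "time travel" idea can be sketched as a walk-forward evaluation: each term is predicted using only cases from earlier terms. The sketch below is an assumption-laden toy, not the {Marshall}+ code. It swaps the random forest for a trivial stand-in model (predict the most common past outcome) so that the example runs with the standard library alone, and the case data and the names `fit_majority` and `walk_forward_accuracy` are hypothetical.

```python
from collections import Counter


def fit_majority(history):
    """Stand-in model (NOT a random forest): the most common past outcome."""
    counts = Counter(outcome for _, outcome in history)
    return counts.most_common(1)[0][0]


def walk_forward_accuracy(cases):
    """cases: list of (term, outcome). Score each term using earlier terms only."""
    terms = sorted({term for term, _ in cases})
    hits = total = 0
    for term in terms[1:]:  # the first term has no history to learn from
        history = [c for c in cases if c[0] < term]
        guess = fit_majority(history)
        for _, outcome in (c for c in cases if c[0] == term):
            hits += guess == outcome
            total += 1
    return hits / total


# Hypothetical outcomes, invented for illustration.
cases = [
    (1953, "reverse"), (1953, "reverse"), (1953, "affirm"),
    (1954, "reverse"), (1954, "affirm"),
    (1955, "affirm"), (1955, "affirm"), (1955, "reverse"),
]
score = walk_forward_accuracy(cases)
```

The point of the structure is that the model never sees a case from the term it is being scored on, or from any later term. Swapping in a stronger model would only change `fit_majority`.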

While the model has impressed many, Blackman still believes in human reasoning. “We expect the humans to win, they’re better,” he told me. “This is not like ‘The Terminator’ where machines will rise.”

On our accuracy:

Blackman is excited to find out what sort of cases the humans are good at predicting, and what sort the machines are good at. With that information, he can begin to craft an “ensemble” prediction, using the best of both worlds. …
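One very simple way such an "ensemble" might work is to route each case to whichever source, human or machine, has the better record on that kind of case. The sketch below assumes that design purely for illustration. The article does not say how the ensemble would be built, and the categories, records, and function names are hypothetical.

```python
def best_source_by_category(history):
    """history: list of (category, human_correct, machine_correct) records."""
    tallies = {}
    for category, human_ok, machine_ok in history:
        h, m = tallies.get(category, (0, 0))
        tallies[category] = (h + human_ok, m + machine_ok)
    # Ties go to the humans; that choice is arbitrary.
    return {cat: "human" if h >= m else "machine" for cat, (h, m) in tallies.items()}


def ensemble_pick(category, human_pick, machine_pick, routing):
    """Use the historically better source; default to humans for unseen categories."""
    source = routing.get(category, "human")
    return human_pick if source == "human" else machine_pick


# Hypothetical track record, invented for illustration.
history = [
    ("criminal", True, False),
    ("criminal", True, True),
    ("economic", False, True),
    ("economic", False, True),
    ("economic", True, True),
]
routing = best_source_by_category(history)
pick = ensemble_pick("economic", "affirm", "reverse", routing)
```

Here the machine has the better record on the made-up "economic" category, so the ensemble takes the machine's pick for that case.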

Machine predictors are bound to improve. Blackman hopes to host a machine competition next year, encouraging others to work off {Marshall}+ and develop their own, better algorithms that can crack 70 percent. And in the long run, the real impact of this work may not have much at all to do with the Supreme Court. With an accurate algorithm in hand, predictions could be generated for the tens of thousands of cases argued every year in lower courts.

“There are roughly 80 cases argued in the Supreme Court every year. That’s a drop in the bucket,” Blackman said.

This could have dramatic, efficiency-boosting effects. Lawyers could better decide when to go to trial, when to settle and how to settle, for example. But there’s a long way to go.

On Chief Justice Jacob Berlove:

So there are the scholars and the machines and the crowd. Composing the crowd are the hobbyists — the intrepid, rugged individualists of the predicting world.

Jacob Berlove, 30, of Queens, is the best human Supreme Court predictor in the world. Actually, forget the qualifier. He’s the best Supreme Court predictor in the world. He won FantasySCOTUS three years running. He correctly predicts cases more than 80 percent of the time. He plays under the name “Melech” — “king” in Hebrew.

Berlove has no formal legal training. Nor does he use statistical analyses to aid his predictions. He got interested in the Supreme Court in elementary school, reading his local paper, the Cincinnati Enquirer. In high school, he stumbled upon a constitutional law textbook.

“I read through huge chunks of it and I had a great time,” he told me. “I learned a lot over that weekend.”

Berlove has a prodigious memory for justices’ past decisions and opinions, and relies heavily on their colloquies in oral arguments. When we spoke, he had strong feelings about certain justices’ oratorical styles and how they affected his predictions.

Some justices are easy to predict. “I really appreciate Justice Scalia’s candor,” he said. “In oral arguments, 90 percent of the time he makes it very clear what he is thinking.”

Some are not. “To some extent, Justice Thomas might be the hardest, because he never speaks in oral arguments, ever.” That fact is mitigated, though, by Thomas’s rather predictable ideology. Justices Kennedy and Breyer can be tricky, too. Kennedy doesn’t tip his hand too much in oral arguments. And Breyer, Berlove says, plays coy.

“He expresses this deep-seated, what I would argue is a phony humility at oral arguments. ‘No, I really don’t know. This is a difficult question. I have to think about it. It’s very close.’ And then all of a sudden he writes the opinion and he makes it seem like it was never a question in the first place. I find that to be very annoying.”

I told Ruger about Berlove. He said it made a certain amount of sense that the best Supreme Court predictor in the world should be some random guy in Queens.

“It’s possible that too much thinking or knowledge about the law could hurt you. If you make your career writing law review articles, like we do, you come up with your own normative baggage and your own preconceptions,” Ruger said. “We can’t be as dispassionate as this guy.”

Berlove also referenced the current supremacy of the best humans over the machines. “There’ll probably be a few top-notch players up there who can do better” than the computer model, he said. But he added, “With time, they might be able to do what they did to Garry Kasparov, or what they did to Ken Jennings,” referring to IBM’s Deep Blue and Watson supercomputers.