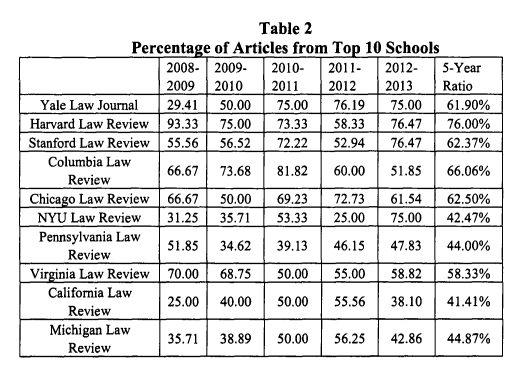

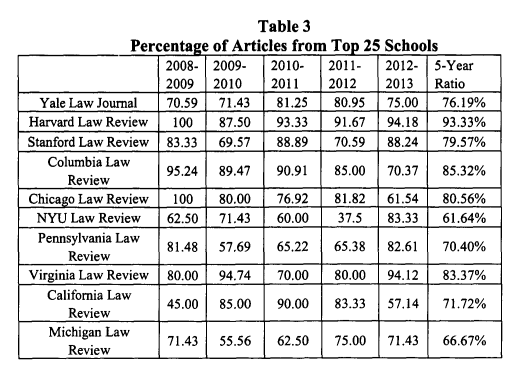

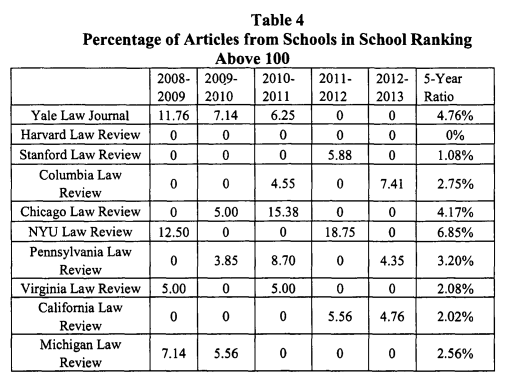

As the August submission window draws to a close, and angst turns to sorrow, two studies should concern anyone submitting articles from a lower-ranked law school. The first, via Paul Caron, is titled *Scholarly Incentives, Scholarship, Article Selection Bias, and Investment Strategies for Today’s Law Schools*. It finds that the top 10 law reviews publish articles almost exclusively by professors from the top 10 law schools. Professors at schools outside the top 100 have virtually no chance of publishing in a top-tier journal.

These numbers speak for themselves.

This pattern creates something of a vicious cycle. Upward mobility in academia is tied closely to publishing in top-tier journals. But publishing in those journals is tied to submitting from a top-tier institution. Breaking the cycle is possible, but it is the rare exception rather than the rule. And, taking a step back, one of the most important credentials in landing a teaching job is which law school one attended: it is the first line on the FAR (the Faculty Appointments Register). Historically, virtually all law professors graduated from the same handful of law schools whose professors successfully place articles. And so on.

Some may counter that a peer-review system would eliminate the letterhead bias. Not so much. An article in *Behavioral and Brain Sciences* describes an interesting test of how impartial peer review actually is:

> A growing interest in and concern about the adequacy and fairness of modern peer-review practices in publication and funding are apparent across a wide range of scientific disciplines. Although questions about reliability, accountability, reviewer bias, and competence have been raised, there has been very little direct research on these variables.
>
> The present investigation was an attempt to study the peer-review process directly, in the natural setting of actual journal referee evaluations of submitted manuscripts. As test materials we selected 12 already published research articles by investigators from prestigious and highly productive American psychology departments, one article from each of 12 highly regarded and widely read American psychology journals with high rejection rates (80%) and nonblind refereeing practices.
>
> With fictitious names and institutions substituted for the original ones (e.g., Tri-Valley Center for Human Potential), the altered manuscripts were formally resubmitted to the journals that had originally refereed and published them 18 to 32 months earlier. Of the sample of 38 editors and reviewers, only three (8%) detected the resubmissions. This result allowed nine of the 12 articles to continue through the review process to receive an actual evaluation: eight of the nine were rejected. Sixteen of the 18 referees (89%) recommended against publication and the editors concurred. The grounds for rejection were in many cases described as “serious methodological flaws.” A number of possible interpretations of these data are reviewed and evaluated.

In other words, the *exact same* articles that had already been published were resubmitted under the letterheads of fictitious, unknown institutions, and eight of the nine that made it through review were rejected. This may rebut one possible criticism: that articles from professors at lower-ranked institutions are less deserving of top-ranked publication slots.

This shouldn’t be news to anyone.